Uniform blog/Blazing Fast Edge-side Personalization for Sitecore XP

Blazing Fast Edge-side Personalization for Sitecore XP

With Uniform, Sitecore customers can leverage the personalization capabilities of the Sitecore Experience Platform (XP), even on Sitecore Experience Manager (XM), without replatforming and while using the CDN of their choice. However, many organizations have found XP’s personalization capabilities challenging to use. Why? At the outset, close collaboration between technical and business users is a must, as are well-defined nonfunctional requirements like performance and scalability. Business-related roadblocks add to the problem. To head off those issues, you must understand how to ensure that the technology involved can meet the demands of delivering contextual content globally.

Along with many colleagues at Uniform, I’ve spent years partnering with some of the most accomplished teams worldwide to build cutting-edge solutions on Sitecore. One pattern stands out that explains why quite a few well-established brands struggle to implement personalization: server-side personalization is slow, expensive, and unscalable. This post delves into our journey of applying edge-side personalization to Sitecore, including the discovery process that led to that approach. The story will, I hope, resonate with you.

Importance of performance

Performance trumps content and its presentation as the most important factor that influences visitor engagement. Slow sites cause visitors to bounce before they even engage with the content. Besides, since Google now uses site performance as a criterion for ranking search results, failing to address performance early and often might drastically lower your SEO ranking. Customer and market expectations change faster than digital experience platforms (DXPs) can adapt, so don’t assume that your costly "enterprise-grade" DXP can overcome performance challenges.

The reality is that cloud services and edge computing continue to become more robust and accessible, opening doors to blazing-fast, scalable personalization that’s decoupled from your DXP’s complex infrastructure footprint, skyrocketing costs, sluggish performance, and limited scalability. A more effective approach is paramount. Below are the results of years of Uniform’s R&D into how to deliver the fastest possible personalized Sitecore sites. Building on that research, Uniform has been helping Sitecore customers globally achieve superfast page loads regardless of the amount of scaling and caching configured on their Sitecore content-delivery (CD) instances.

Three components of personalizing webpages in Sitecore

Personalizing Sitecore sites involves three moving parts:

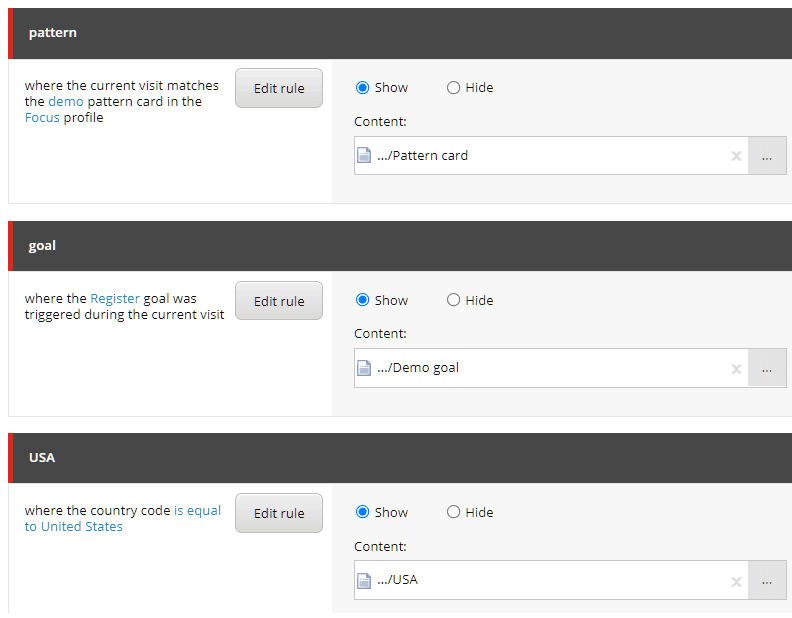

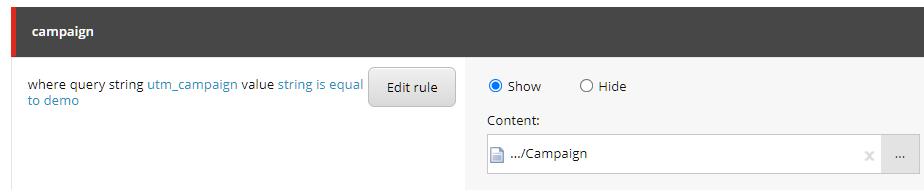

- Configuration: With the Sitecore Rules Editor, business users define the rules associated with a component on a page, specifying how to personalize the component for audiences. Sitecore stores the rules in a field on the page to which the component is assigned.

- Execution: When a visitor views a page with personalized components, the CD instance runs the rules assigned to them, i.e., personalizes the page, as part of the standard rendering process.

- Context: The context is the data, such as information on the current session (e.g., a campaign) and the visitor (e.g., the individual’s ID), through which the personalization process determines how to customize a component for a visitor. The context is maintained by the Sitecore tracker, which runs on the CD instance. When the visitor returns to the site, this data is rehydrated from xDB and kept in the out-of-process session, typically backed by Redis.

In essence, configuration + execution + context = personalization.
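To make the formula concrete, here is a minimal TypeScript sketch of the three parts. The types and names (`PersonalizationContext`, `Rule`, `personalize`) are hypothetical illustrations, not Sitecore APIs. The rules stand for the configuration, the context object for the session and visitor data, and execution in this sketch simply picks the first rule whose condition matches, falling back to the default content.

```typescript
// Hypothetical model of "configuration + execution + context = personalization".
// None of these names come from Sitecore; they only illustrate the idea.

// Context: data about the current visit and visitor.
interface PersonalizationContext {
  campaign?: string;   // e.g., taken from the current session
  visitNumber: number; // 1 for first-time visitors
}

// Configuration: a rule authored by a business user for one component.
interface Rule {
  name: string;
  condition: (ctx: PersonalizationContext) => boolean;
  contentId: string; // the content to show when the rule matches
}

// Execution: evaluate the rules in order; the first match wins, else the default.
function personalize(rules: Rule[], defaultContentId: string, ctx: PersonalizationContext): string {
  const match = rules.find((rule) => rule.condition(ctx));
  return match ? match.contentId : defaultContentId;
}

const heroRules: Rule[] = [
  { name: "Demo campaign", condition: (c) => c.campaign === "demo", contentId: "hero-demo" },
  { name: "Returning visitor", condition: (c) => c.visitNumber > 1, contentId: "hero-welcome-back" },
];

console.log(personalize(heroRules, "hero-default", { campaign: "demo", visitNumber: 1 })); // hero-demo
console.log(personalize(heroRules, "hero-default", { visitNumber: 3 })); // hero-welcome-back
console.log(personalize(heroRules, "hero-default", { visitNumber: 1 })); // hero-default
```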

Personalization process

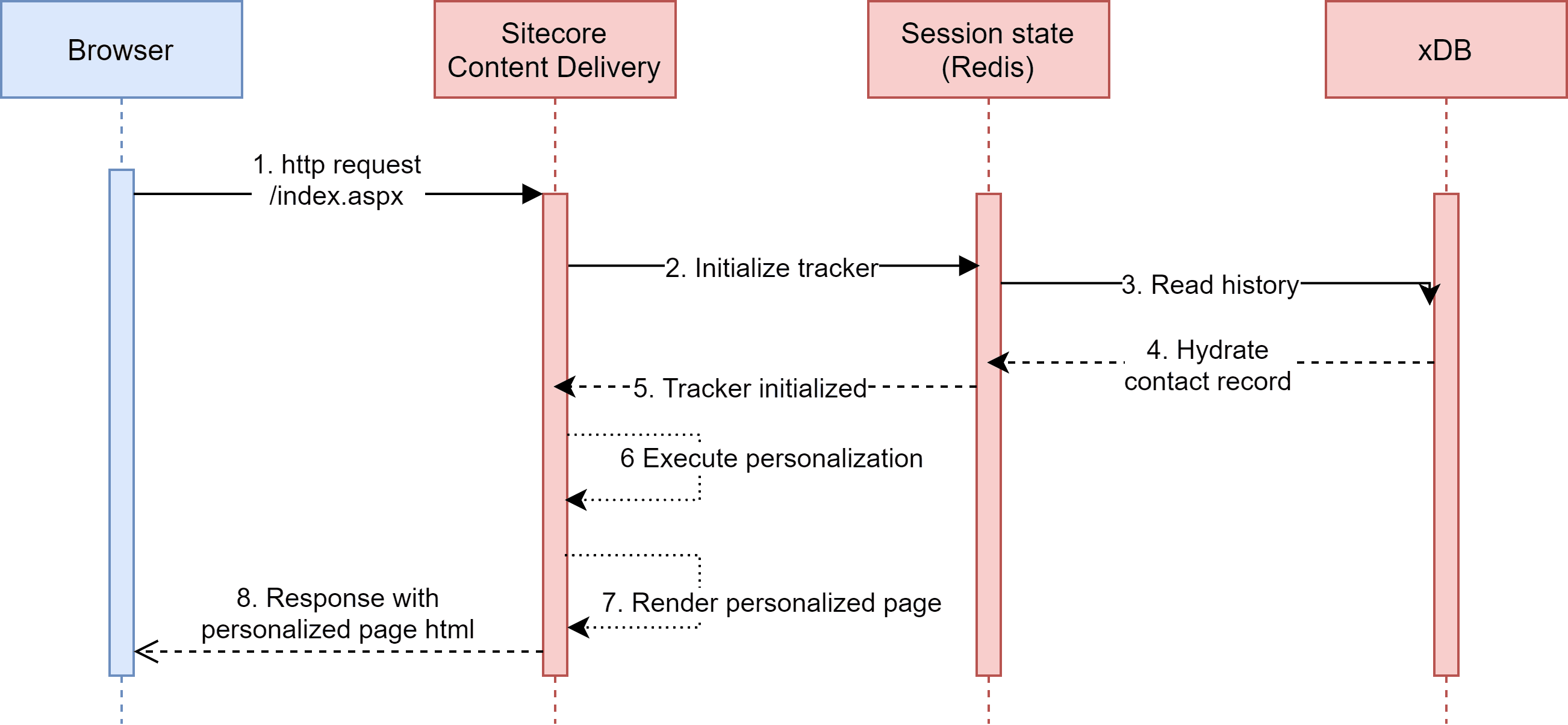

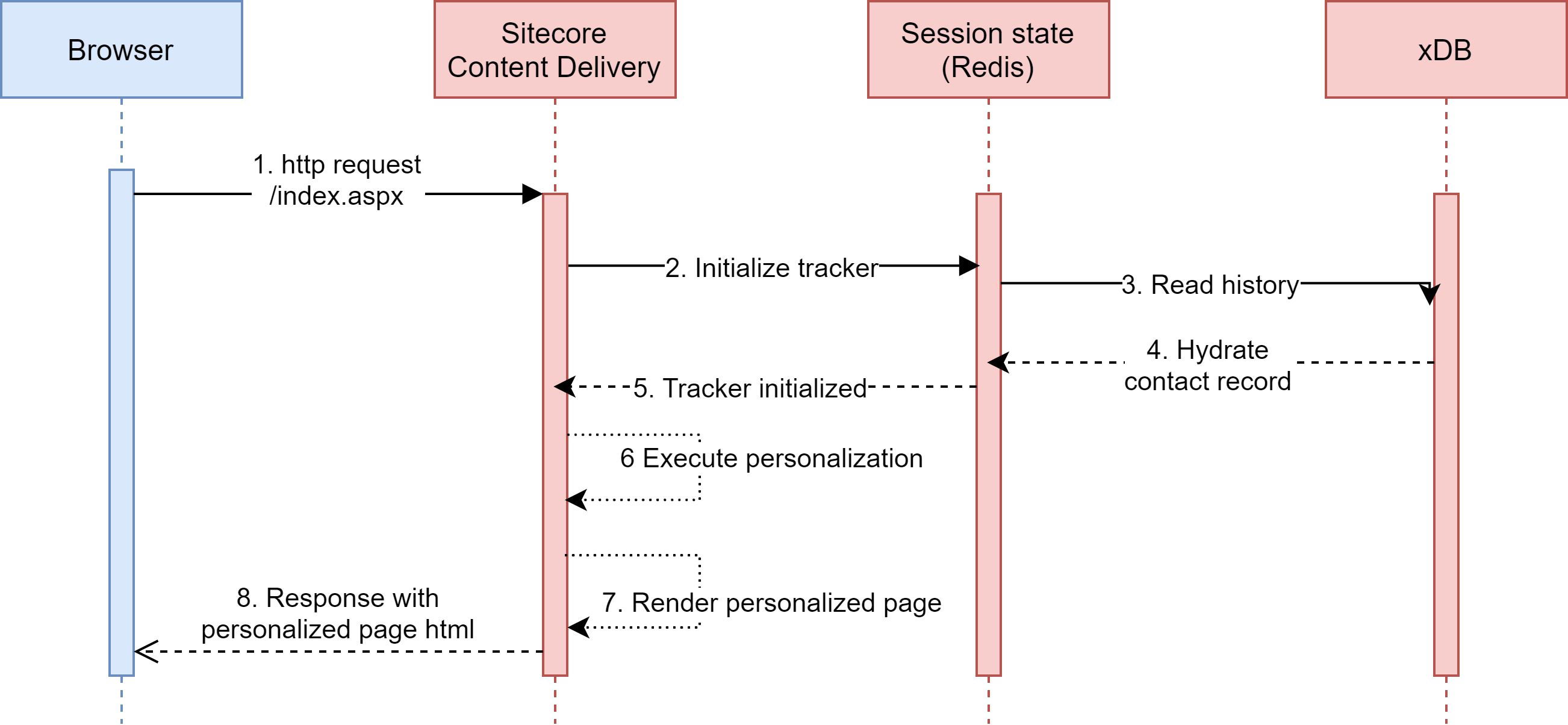

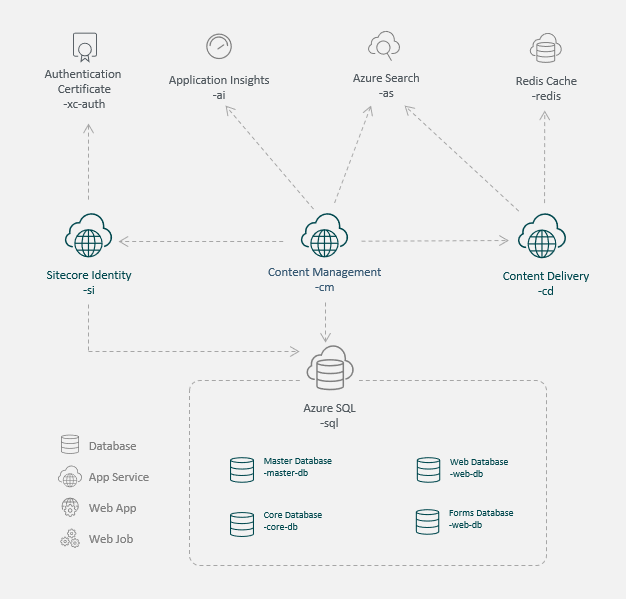

Besides the Sitecore CD instance, other actors play critical roles in executing personalization rules. The simplified diagram and description below explain the essential components of origin-based personalization.

Note: The term “origin,” which comes from architectures that cache content through content delivery networks (CDNs), aka the “edge,” refers to the location from which CDNs acquire the content for caching. Upon receiving a request for a page not already in its cache, the CDN solicits that page from the origin. The CDN can then handle subsequent requests for the page from its cache, delivering the page much faster than the origin.

- The browser sends an HTTP request to the Sitecore CD instance, which runs in a data center. For returning visitors, the request includes a cookie that identifies them. That cookie, along with other context data such as the visitor’s IP address, is available to the CD instance when it executes the personalization rules.

- The CD instance creates a server-side data object that represents the page context, populating the object with context data from the HTTP request. The instance also initializes the session, which stores the visit data. For returning visitors, the instance retrieves the contact record from Sitecore xDB and adds it to the session.

- The CD instance executes the personalization rules according to the context data, pinpointing the components and content that make up the requested page, and Sitecore’s page-assembly process renders the page markup on the server.

- The CD instance creates an HTTP response, which includes the resulting markup and a tracking cookie to identify the visitor as a returning patron, as applicable, and sends the response back to the visitor's browser.

- The browser renders the page as a personalized experience for the visitor.
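The steps above can be sketched as a single request handler. This is a minimal illustration under loudly stated assumptions: the `visitor_id` cookie name, the in-memory `xdb` map standing in for the xDB contact store, and `handleOnOrigin` are all hypothetical, and real Sitecore internals differ. The point is that every step, including the contact lookup, happens on the origin for every personalized request.

```typescript
// Minimal sketch of the origin-based flow above. The cookie name, the in-memory "xDB"
// map, and all function names are hypothetical; real Sitecore internals differ.

interface Contact {
  id: string;
  visits: number; // counts requests as visits, for simplicity
}

interface OriginResponse {
  html: string;
  setCookie: string;
}

const xdb = new Map<string, Contact>(); // stand-in for the xDB contact store
let nextId = 1;

function parseVisitorId(cookieHeader: string): string | undefined {
  for (const part of cookieHeader.split(";")) {
    const [name, value] = part.trim().split("=");
    if (name === "visitor_id" && value) return value;
  }
  return undefined;
}

function handleOnOrigin(cookieHeader: string): OriginResponse {
  // 1. Build the context from the request; a cookie identifies a returning visitor.
  const knownId = parseVisitorId(cookieHeader);
  // 2. Retrieve the contact (in a real system, an I/O call to xDB) or create a new one.
  const existing = knownId !== undefined ? xdb.get(knownId) : undefined;
  const contact: Contact = existing ?? { id: `v${nextId++}`, visits: 0 };
  contact.visits += 1;
  xdb.set(contact.id, contact);
  // 3. Execute the personalization rule and render the markup.
  const heading = contact.visits > 1 ? "Welcome back!" : "Hello, first-time visitor!";
  // 4. Respond with the markup and a tracking cookie for the next visit.
  return { html: `<h1>${heading}</h1>`, setCookie: `visitor_id=${contact.id}; Path=/; HttpOnly` };
}

const firstVisit = handleOnOrigin("");
const returnVisit = handleOnOrigin(firstVisit.setCookie.split(";")[0]);
console.log(firstVisit.html);  // <h1>Hello, first-time visitor!</h1>
console.log(returnVisit.html); // <h1>Welcome back!</h1>
```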

The hallmark of origin-based personalization is that it occurs on an application server—the CD instance in the case of Sitecore.

Architectural considerations for scaling

This section answers a popular question: How do I scale a solution that requires origin-based personalization?

CDNs

An issue with origin-based personalization is that CDNs are not an option, i.e., personalization works only if the origin can execute it. Thus, you cannot cache personalized pages on the CDN, and all requests for personalized pages must always go through the CD instance.
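The reason is easy to see in a toy model. In this minimal sketch, the cache is keyed by URL only, as a CDN cache typically is, and the `campaign` argument stands for context held in the visitor’s session on the origin, so it is not part of the cache key. The renderer and values are hypothetical. The usual remedy is to mark personalized responses as uncacheable (for example, with `Cache-Control: private`), which is exactly why every such request ends up at the CD instance.

```typescript
// Minimal sketch: a shared cache keyed only by URL cannot tell visitors apart.
// The campaign stands for session context on the origin; names and values are hypothetical.

const sharedCache = new Map<string, string>();

function renderPersonalized(url: string, campaign: string | undefined): string {
  return campaign === "demo" ? `${url}: demo hero` : `${url}: default hero`;
}

function serveThroughCache(url: string, campaign: string | undefined): string {
  const hit = sharedCache.get(url);
  if (hit !== undefined) return hit; // cache hit: the origin, and thus personalization, is skipped
  const html = renderPersonalized(url, campaign); // cache miss: the origin renders the page
  sharedCache.set(url, html);
  return html;
}

console.log(serveThroughCache("/home", "demo"));    // /home: demo hero
console.log(serveThroughCache("/home", undefined)); // /home: demo hero (wrong variant for this visitor)
```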

Scaling of CD instances

Besides page rendering, CD instances usually also handle API requests, search queries, cache invalidations on publish, and background tasks. The more tasks a CD instance performs, the fewer requests it can handle. Adding duties like managing visitor session state and running personalization inevitably creates the need to scale. Even though horizontal and vertical scaling are both feasible, neither is a silver bullet, for the following reasons:

- Cost: Scaling CD instances raises operational costs, which are unpredictable and liable to add up quickly.

- Cold startup time: When scaling out, i.e., horizontally, you add CD instances to your architecture. Even though that’s practicable when certain performance thresholds are met, it takes time for new instances to be able to handle requests, a delay called cold startup time. All too often, after a new instance joins the mix, visitors who are routed to that instance experience significant performance degradation while the instance warms up, which can take minutes.

- Effectiveness: Even though scaling up CD instances, i.e., vertically, makes them run faster, you are limited by the hardware available. In general, scaling out lets you handle more visitors but does not raise performance.

Session state

Managing out-of-process session state, a critical ingredient of personalization that is usually backed by Redis, frequently causes scaling problems in most systems, not just Sitecore. That’s because session-state management adds extra input/output (I/O) operations, which are among the most time-consuming operations in computing. Plus, this layer is generally "hot," i.e., very active. You must provision adequate service tiers to prevent it from becoming a bottleneck that raises infrastructure and operational costs.

Network latency

Network latency is the amount of time it takes for data to physically move from one system to another. Bandwidth limits how much data can move at once, but even with infinite bandwidth, data movement across a network is bounded by the laws of physics. Over global distances, that speed limit can reduce performance considerably. Two areas in personalization are particularly prone to network latency:

- Between the visitor's browser and the CD instance that services the request.

- Between the CD instances and xDB.
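A back-of-the-envelope calculation shows why distance matters. This sketch assumes the great-circle (haversine) distance and light in optical fiber traveling at roughly 200,000 km/s. The coordinates are approximate, with Amsterdam standing in for an Azure West Europe data center. Real routes are longer and add hops, so the result is a lower bound, not a measurement.

```typescript
// Back-of-the-envelope lower bound for network latency between a visitor and an origin.
// Assumptions: great-circle (haversine) distance and light in fiber at ~200,000 km/s.
// Real routes are longer and add hops, so actual latency is higher.

const EARTH_RADIUS_KM = 6371;
const FIBER_SPEED_KM_PER_MS = 200; // ~200,000 km/s

interface LatLon {
  lat: number;
  lon: number;
}

function greatCircleKm(a: LatLon, b: LatLon): number {
  const toRad = (deg: number) => (deg * Math.PI) / 180;
  const dLat = toRad(b.lat - a.lat);
  const dLon = toRad(b.lon - a.lon);
  const h =
    Math.sin(dLat / 2) ** 2 +
    Math.cos(toRad(a.lat)) * Math.cos(toRad(b.lat)) * Math.sin(dLon / 2) ** 2;
  return 2 * EARTH_RADIUS_KM * Math.asin(Math.sqrt(h));
}

function minRoundTripMs(a: LatLon, b: LatLon): number {
  return (2 * greatCircleKm(a, b)) / FIBER_SPEED_KM_PER_MS;
}

const amsterdam: LatLon = { lat: 52.37, lon: 4.9 }; // approximate West Europe data-center location
const sydney: LatLon = { lat: -33.87, lon: 151.21 };

console.log(Math.round(greatCircleKm(amsterdam, sydney)));  // roughly 16,600 km
console.log(Math.round(minRoundTripMs(amsterdam, sydney))); // roughly 166 ms per round trip
```

Because a new HTTPS connection typically needs several round trips (TCP and TLS handshakes, then the request itself) before the first byte arrives, this floor is paid more than once per page request.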

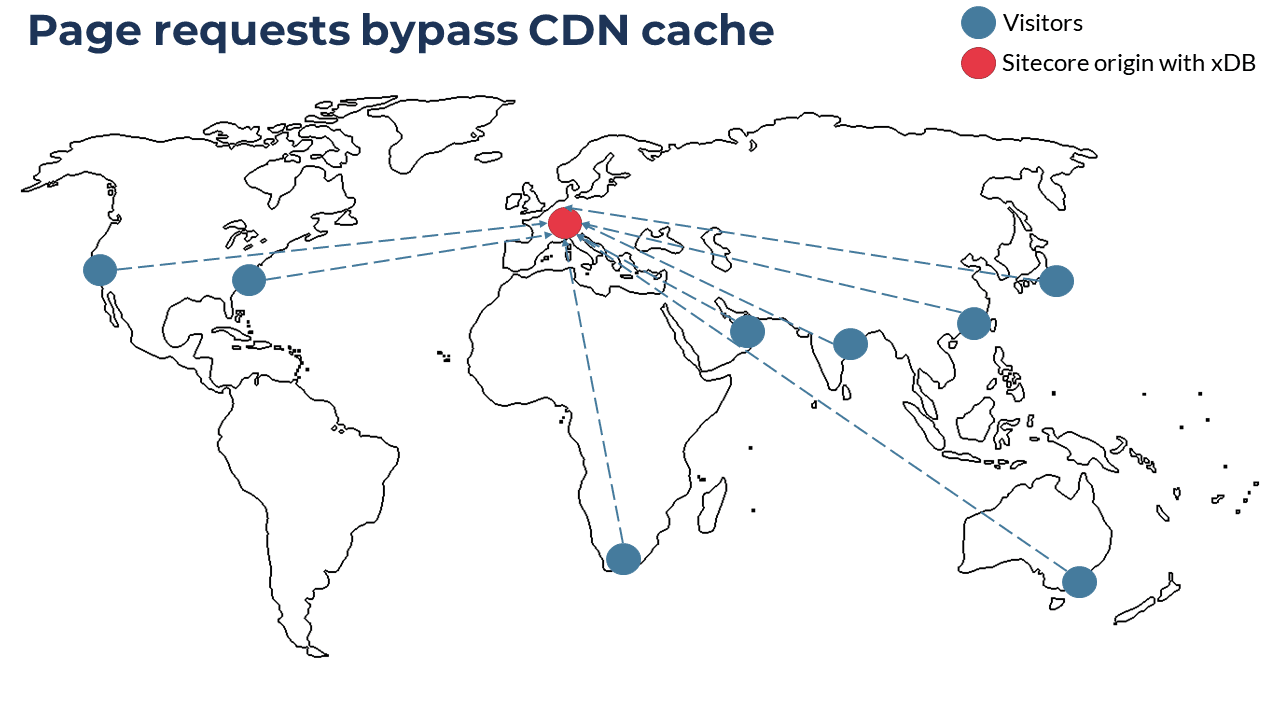

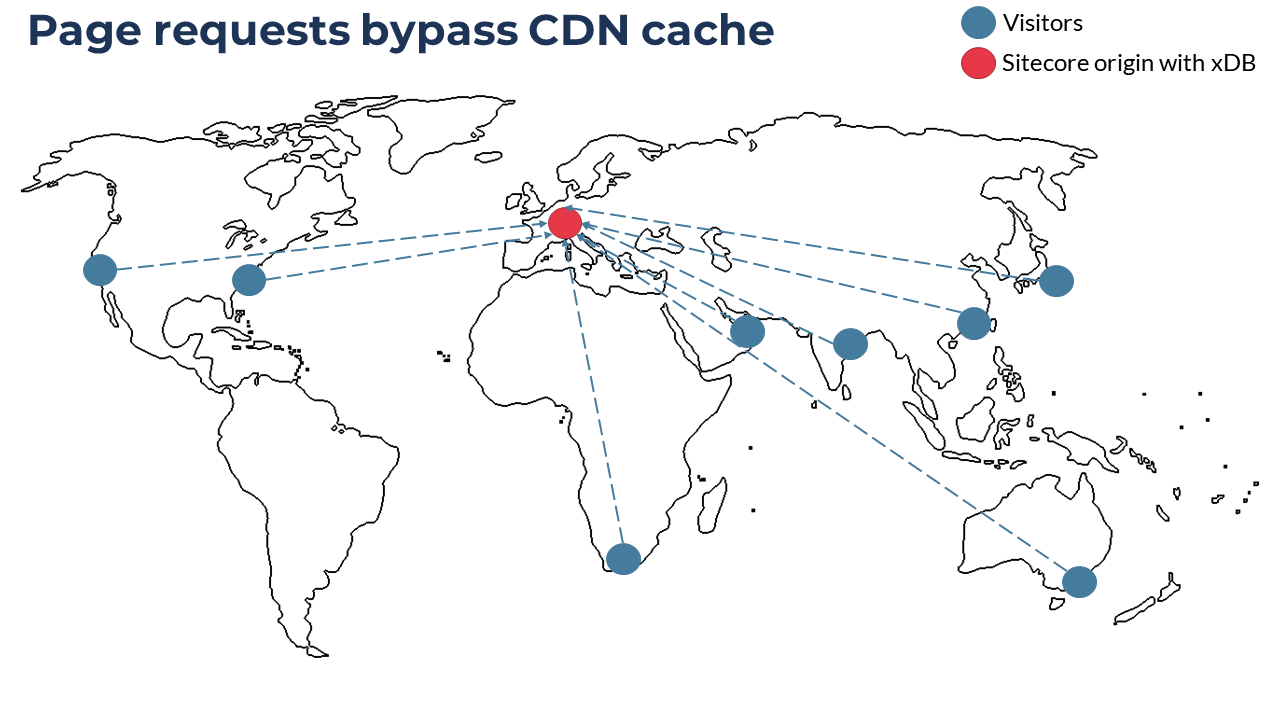

One way to reduce the latency between the visitor’s browser and the CD instance has been to deploy clusters of instances in various regions. Despite the high expenditure involved, a number of businesses have done so. However, scaling with Sitecore’s origin-based personalization adds complexity. xDB, on which that capability relies, is a single-origin system that is not distributed out of the box. Even though, with the recent release of Sitecore 10.1 and SQL Server’s Always On availability groups, you can scale out the xConnect read replicas, doing so further raises the cost and bloats the architecture, not to mention that you must upgrade to Sitecore 10.1. The writes must still go to a centralized instance. So, despite the demands of global traffic, most implementations stay confined to a single region, at least on Sitecore XP. Even if you geographically distribute the CD infrastructure and assume the added cost and complexity, a bona fide global XP platform is likely to remain an academic topic.

Implications

The diagram below illustrates the realities of a single-origin deployment, hosted in Azure’s West Europe region, that serves a global audience. Visitors request personalized pages from around the world.

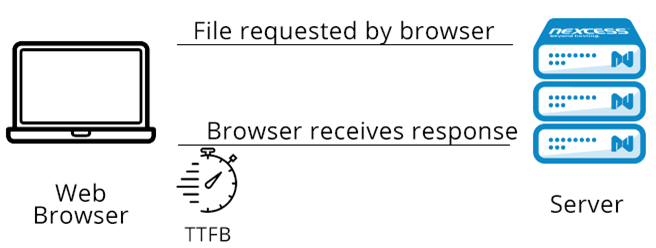

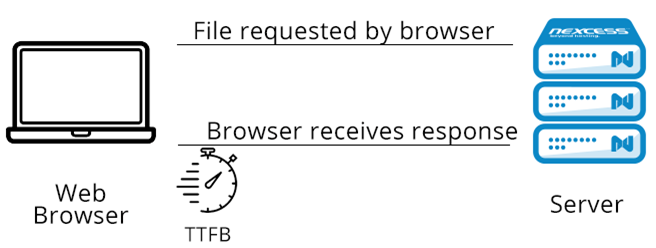

Performance measurement by means of Time to First Byte

Scaling and performance-tuning your Sitecore architecture makes the CD instance return a response to the visitor’s browser faster, as measured by a metric called Time to First Byte (TTFB), i.e., the amount of time it takes for the visitor’s browser to receive the first byte of data from the CD instance. Because TTFB includes a full round trip to the CD instance, it also reflects network latency. Note: As of 2021, TTFB typically ranged from 100 to 500 ms. Google, which uses a site’s TTFB as a factor in determining search results, suggests a goal of 200 ms.
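To check TTFB on your own pages, the browser’s Navigation Timing API exposes the relevant timestamps: `performance.getEntriesByType("navigation")[0]` returns a `PerformanceNavigationTiming` entry with `startTime`, `requestStart`, and `responseStart` fields. This minimal sketch computes TTFB from a plain object with those field names so that the logic runs outside a browser. The sample timings are invented for illustration, and the 200-ms goal is the one cited above.

```typescript
// Minimal sketch: derive TTFB from Navigation Timing values. In a browser, you could pass
// performance.getEntriesByType("navigation")[0]; here a plain object with the same
// field names stands in. The sample numbers are hypothetical.

interface NavigationTimingLike {
  startTime: number;     // navigation start (0 for the document's own navigation entry)
  requestStart: number;  // when the browser started sending the request
  responseStart: number; // when the first byte of the response arrived
}

const TTFB_GOAL_MS = 200;

function timeToFirstByte(entry: NavigationTimingLike): number {
  // Includes DNS lookup, connection setup, the request round trip, and server processing.
  return entry.responseStart - entry.startTime;
}

function serverWaitTime(entry: NavigationTimingLike): number {
  // From sending the request to receiving the first byte: the round trip plus origin work,
  // such as executing personalization rules and rendering.
  return entry.responseStart - entry.requestStart;
}

const sample: NavigationTimingLike = { startTime: 0, requestStart: 180, responseStart: 820 };
console.log(timeToFirstByte(sample), timeToFirstByte(sample) <= TTFB_GOAL_MS); // 820 false
console.log(serverWaitTime(sample)); // 640
```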

Relationship of TTFB and personalization

Except for performance nerds glued to the network view of their browser’s developer console, most visitors don’t care about TTFB. After all, fast TTFB alone does not guarantee intuitive UX and a high SEO ranking. However, TTFB is the foundational metric that affects other Core Web Vitals. More important, it’s the one metric directly affected by origin-based personalization, because it includes both network latency and the cost of the additional personalization-related work: instantiating the context, executing the rules, and rendering the page.

Effect on UX

While navigating a site, you might have seen a blank page that stays on screen for a while. That’s usually a symptom of slow TTFB: the browser cannot display content until after it has downloaded the HTML document and other resources (CSS, JavaScript, images) for parsing and execution.

As an illustration, I did the following:

- Spun up a vanilla Sitecore XP Single deployment in Azure PaaS in West Europe. Since the CD instance carries no load, no scaling is required.

- Installed a simple MVC site, configured campaign-based personalization, and ran a session from Sydney, Australia, on a broadband connection through webpagetest.org.

Although that’s an extreme scenario in terms of the distance between the browser and the origin, it clearly shows the effect of slow TTFB on the UX:

That’s a test conducted in a controlled environment. In the real world:

- Fast mobile connections are not evenly distributed globally, and the variability of mobile networks further complicates the mix.

- The vanilla example above only serves a simple page. Real-life solutions would incur the additional cost of executing request-pipeline customizations, running costly data access and logic as a part of the rendering, maybe even performing additional I/O like fetching data from a remote search index. You’d normally mitigate all that with HTML caching, but that’s no cure-all.

- When new content is published, the corresponding Sitecore caches are invalidated, which significantly affects TTFB; with HTML caching, publishing can in some cases clear the whole cache. The more you publish, the more drastic the effect on TTFB.

- New deployments recycle the state of CD instances. On larger systems, it can take minutes for the instances to warm up, during which they cannot handle traffic.

- A traffic spike leads to either of these scenarios:

  - If you are not provisioned to handle the traffic volume, visitors will experience slow page loads and even timeouts.

  - If you have autoscaling turned on, the scale-out process will start, adding more instances to the pool to serve traffic. The effect is the same as when you deploy a new CD instance: it takes time for new instances to initialize and warm up, and in the meantime visitors may see no page at all.

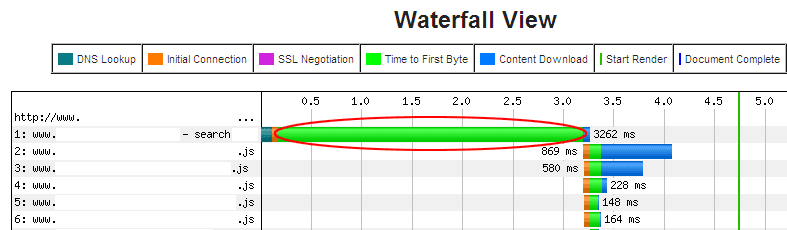

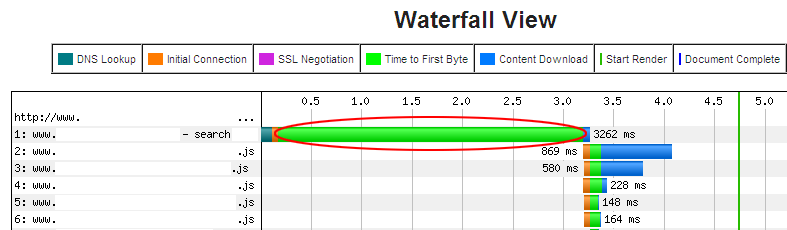

You might have heard of the goal of a subsecond page-load time. With origin-based personalization, that goal is often out of reach because the bare HTML document itself arrives too slowly, which blocks the rest of the waterfall from completing in under a second. If you have troubleshot slow response times from CD servers before, the picture below is likely familiar, with a blank screen displayed for longer than three seconds:

Credit: websiteoptimization.com.

All the data points described above clearly suggest that personalization needs a different approach to meet modern performance expectations.

An alternative approach

This section examines an alternative approach and explains why Uniform ultimately opted for edge-side personalization.

Requirements

The alternative approach must meet three requirements:

- No rebuild, upgrade, or replatforming. Given customers’ sizable investments in Sitecore XP, it would be bad business practice to insist that they rebuild their site, upgrade their environment, or, worst of all, switch to another architecture or platform.

- No changes for business users. A major reason why customers buy Sitecore is that it offers effective tools for business users, whom Sitecore trains on how to use them. Any alternate approach must preserve that value, i.e., regardless of whether they use Content Editor or Experience Editor, business users must be able to configure personalization with the Sitecore Rules Editor without leaving their authoring environment.

- Support for complex personalization scenarios. The approach must be compatible with complex personalization strategies, such as those based on visitor behavior, customer data from outside xDB (e.g., a DMP or CDP), and historical visitor activity.

Problems of origin-based personalization

As the right side of the sequence diagram shows, this alternative approach is similar to the standard one. Even though the static HTML page benefits from a faster TTFB, that page contains no personalized content, which you must fetch client side. Hence, the same TTFB characteristics apply to the requests for personalized content. Decoupling personalized content from page rendering therefore has no effect on the personalization process that runs when the Layout Service API is called. All the limitations of the origin-based process still apply.

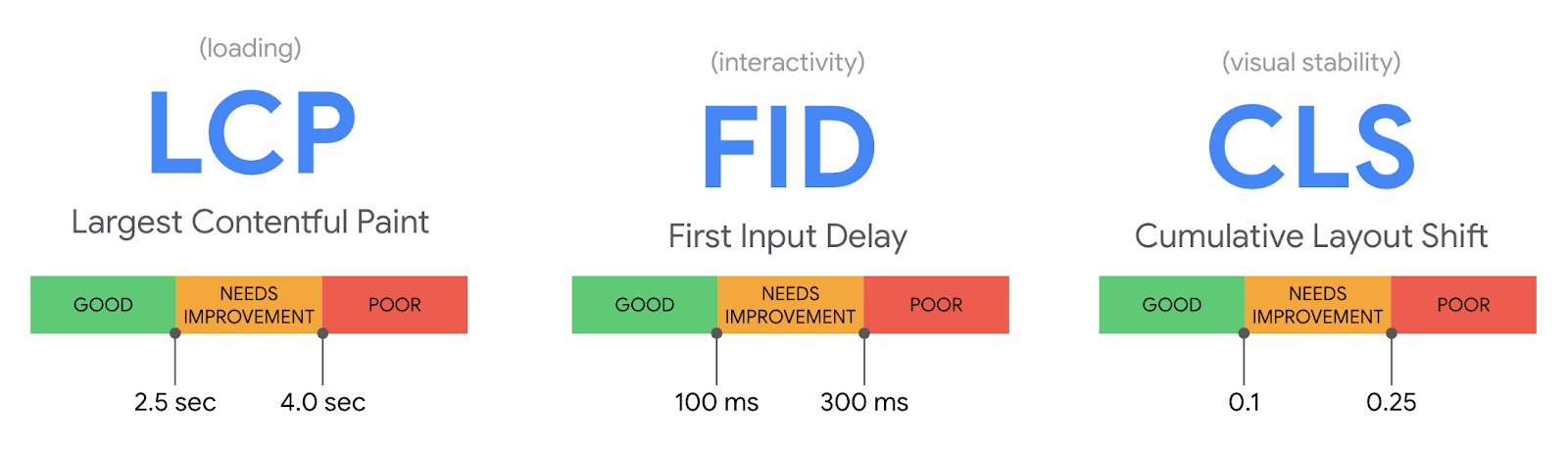

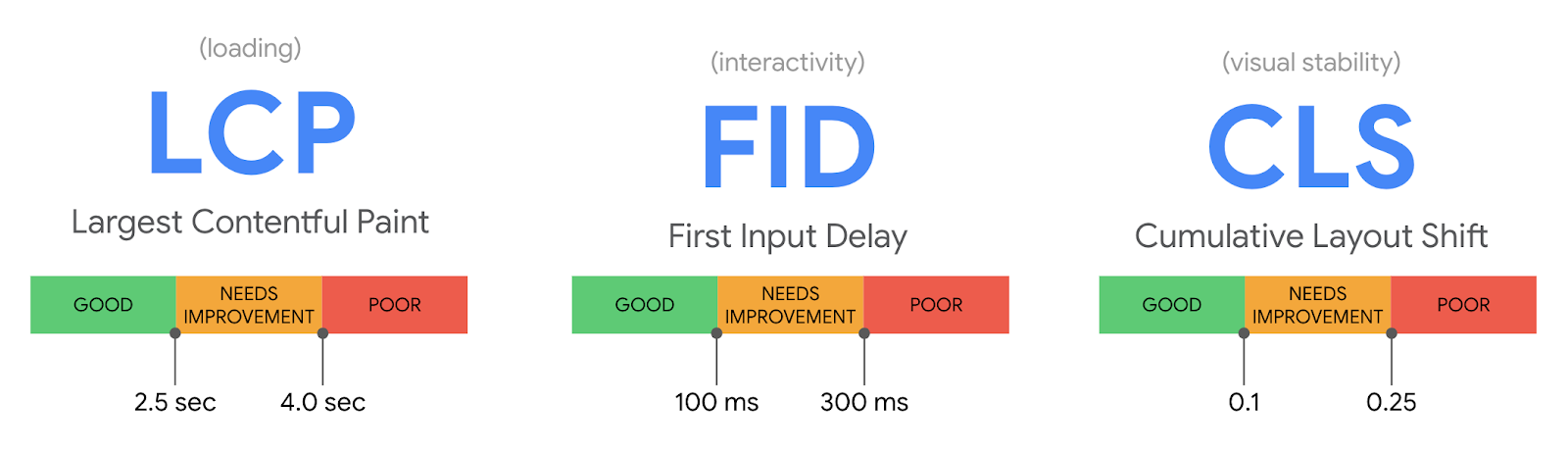

Negative side effects on Core Web Vitals

Several unintended consequences from the alternative approach might affect your Core Web Vitals.

First and Largest Contentful Paint metrics

All the components that support personalization require logic to handle their loading state. Since the personalized content is not known at build time of the static portion of the site, you cannot display that content to visitors until the page is downloaded, the JavaScript is parsed and executed, the API call for the personalized data is made, the response is received, and the component is rerendered. Depending on myriad factors, those steps could take seconds, especially if your web application is large, the device is slow, or the remote API endpoint is sluggish to respond. Personalizing a footer is easy, but personalizing an element above the fold, which is common because that’s where personalization can have the greatest effect, requires careful consideration. Depending on your site, such personalization could result in a high First Contentful Paint (FCP), a high Largest Contentful Paint (LCP), or both. As a workaround, you can display a content placeholder, which requires programming a unique loading state per component. Naturally, that takes developer effort, not to mention that it won’t win you UX awards.
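A per-component loading state might look like the following minimal sketch. It is framework-agnostic and returns markup strings; the state shape, CSS class, and reserved height are hypothetical. Reserving a fixed height while the personalization call is in flight limits layout movement, and falling back to the default content on failure keeps the component from staying empty.

```typescript
// Minimal sketch of a per-component loading state for client-side personalization.
// The state shape, CSS class, and reserved height are hypothetical.

type PersonalizedState =
  | { status: "loading" }
  | { status: "ready"; html: string }
  | { status: "failed" };

function renderHero(state: PersonalizedState, defaultHtml: string, reservedHeightPx: number): string {
  if (state.status === "loading") {
    // Placeholder that reserves space while the personalization API call is in flight.
    return `<div class="hero-skeleton" style="min-height:${reservedHeightPx}px" aria-busy="true"></div>`;
  }
  if (state.status === "ready") {
    return state.html;
  }
  // The call failed or timed out: fall back to the default content.
  return defaultHtml;
}

console.log(renderHero({ status: "loading" }, "<h1>Welcome</h1>", 420));
console.log(renderHero({ status: "ready", html: "<h1>Demo campaign hero</h1>" }, "<h1>Welcome</h1>", 420));
```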

Cumulative Layout Shift

Another challenge is Cumulative Layout Shift (CLS), an important user-centric metric for measuring visual stability, which quantifies how often visitors experience unexpected shifts in the page layout, such as components jumping around during page loads. A low CLS score helps ensure a first-rate experience for visitors.

Furthermore, as one of the Core Web Vitals, CLS is likely to be negatively affected by page updates made after the original content has downloaded. Since the height of a personalized piece of content varies and can be neither controlled nor predicted by developers, addressing the CLS issue is especially tricky.

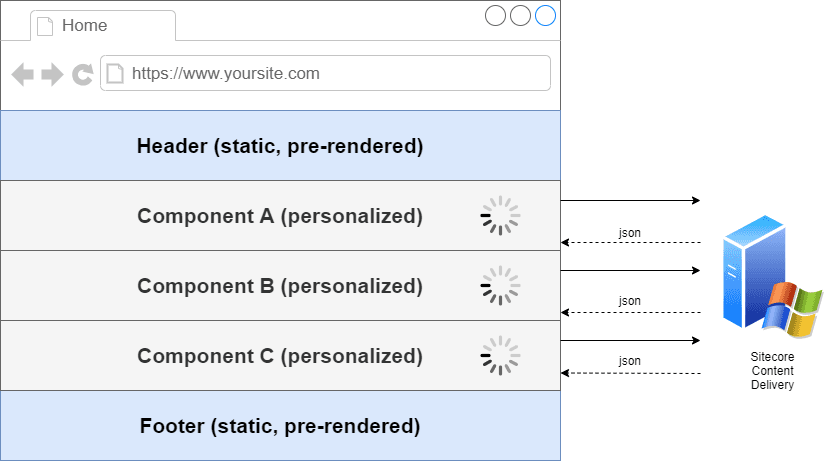

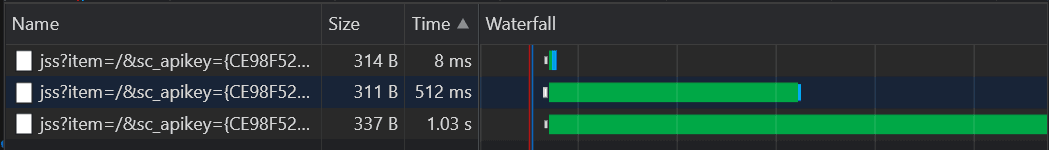

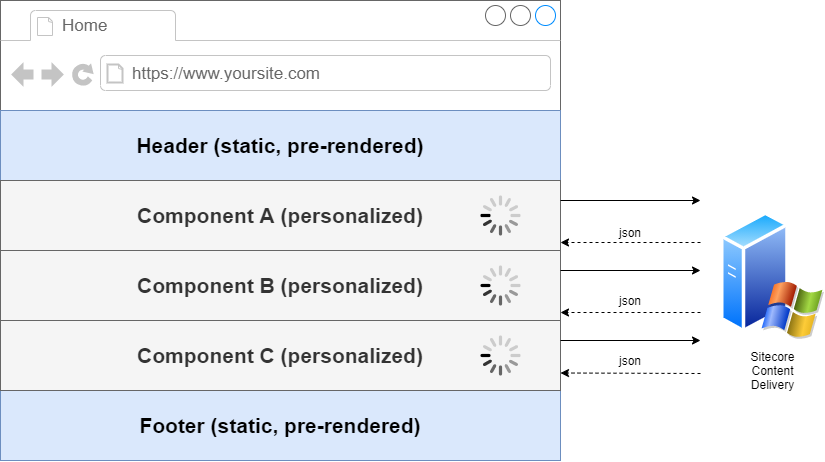

Session-locking and multiplaceholder personalization

Consider a scenario with personalized components bound to three different placeholders. You must then make three separate requests to the Layout Service API, which the CD instance will not process in parallel due to session locking. Instead, the requests are queued on the CD instance: the request for placeholder A must complete before the instance processes the request for placeholder B, and the request for placeholder B must complete before the instance handles the request for placeholder C.

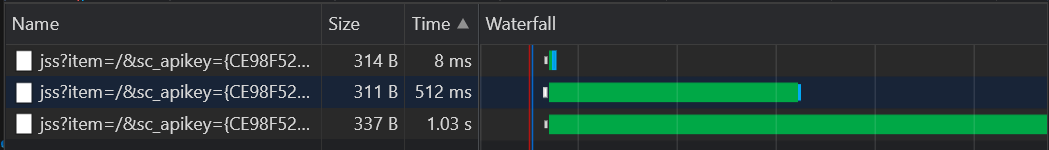

That default behavior, not specific to JSS, has been around for a while and is well known within the Sitecore community. For details, see this excellent post by Jeroen de Groot and this Knowledge Base article by Sitecore. Below is a demo of the process on a vanilla local Sitecore XP 10.1 instance with a Sitecore JSS React app deployed and no network throttling.

Here, the TTFB rises with each Layout Service API request, with the third one taking over one second, even from a local instance. The more API and AJAX calls your system makes, the more taxing this approach is.

Making one request for the whole layout instead of individual requests resolves the issue to an extent, but doing so fetches more data than your front end needs and generates a heavier workload for the CD instance. You cannot request personalized data for an individual component with the out-of-the-box APIs. One way out is to customize the API controllers so that their session state is read-only. However, side effects from those customizations might negatively affect personalization and the overall stability of the solution.
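The queuing effect can be modeled with simple arithmetic. This minimal sketch assumes that all three placeholder requests arrive at the same moment, that each needs a fixed amount of server time, and that network time is negligible. The 350-ms figure is hypothetical, not a measurement from the demo. With an exclusive session lock, completion times add up; with read-only session state, the requests can overlap.

```typescript
// Minimal sketch of how an exclusive session lock serializes one visitor's concurrent requests.
// Assumptions: all requests arrive at t = 0, each needs a fixed amount of server time, and
// network time is ignored. The 350-ms figure is hypothetical.

function completionTimesMs(serverTimesMs: number[], sessionLocked: boolean): number[] {
  if (!sessionLocked) {
    // Read-only (or no) session state: the requests run in parallel.
    return serverTimesMs.slice();
  }
  // Exclusive lock: each request waits until the previous one releases the session.
  const done: number[] = [];
  let clock = 0;
  for (const t of serverTimesMs) {
    clock += t;
    done.push(clock);
  }
  return done;
}

const placeholderRequests = [350, 350, 350]; // placeholders A, B, and C
console.log(completionTimesMs(placeholderRequests, true).join(", "));  // 350, 700, 1050
console.log(completionTimesMs(placeholderRequests, false).join(", ")); // 350, 350, 350
```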

Drawbacks

Even though this alternative approach delivers personalization without the render-blocking that accompanies traditional Sitecore page delivery, the underlying architecture still depends on origin-based personalization. High TTFB for the async request for personalized content could hurt the UX, depriving you of the opportunity to engage with visitors who scroll away or close the browser tab after giving up on waiting for personalized content to load. In addition, the scaling challenges inherent in xDB remain. For an answer, we turned to the exciting world of serverless edge computing. For details on that topic, read these two articles: one by Akamai and the other by Cloudflare.

Edge-side personalization

The CD instance is a bottleneck that defies resolution as long as personalization rules must execute there. Moving the execution of personalization off the instance first requires defining which parts of the process to move and where to move them.

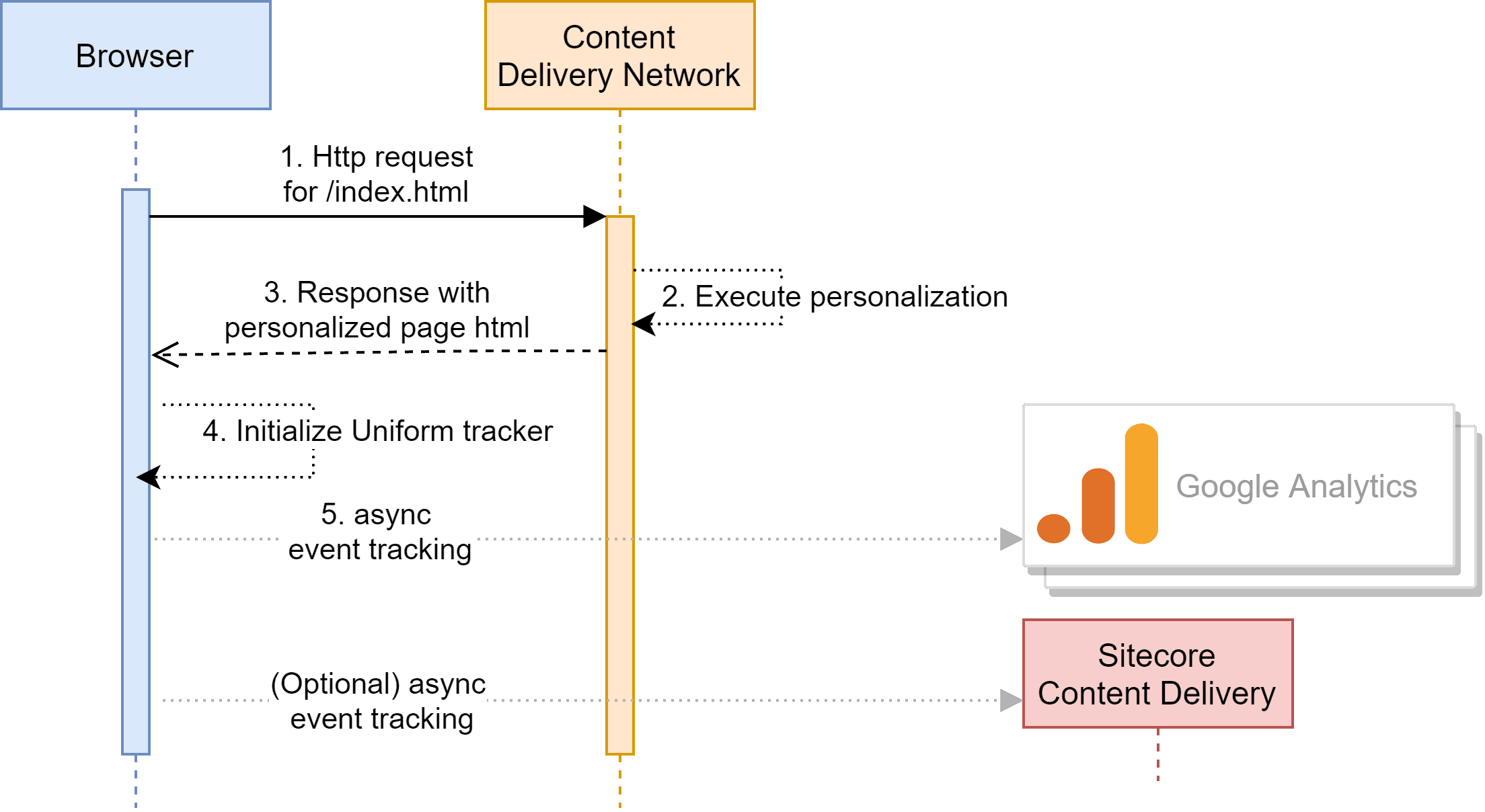

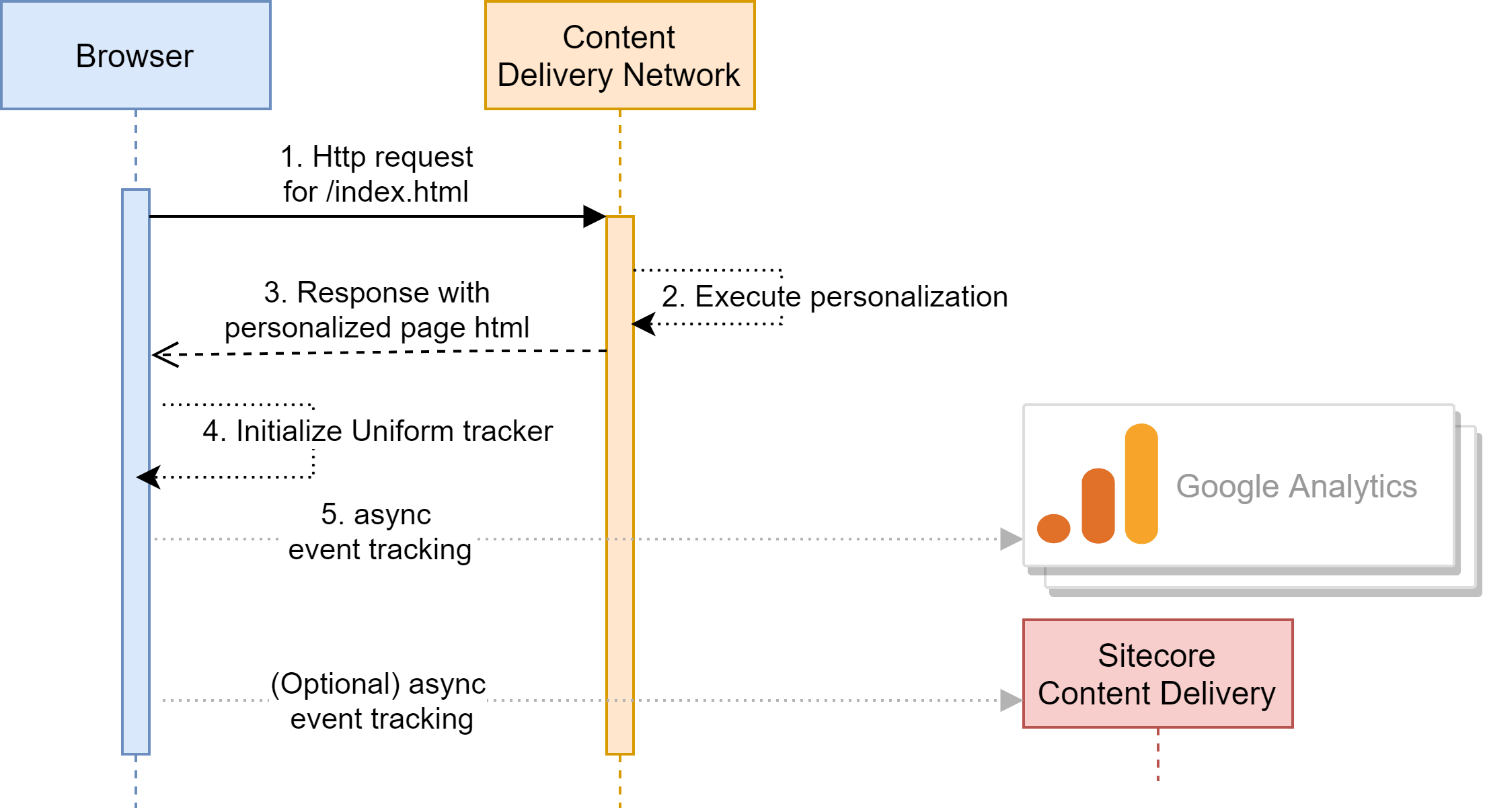

Regarding the process, recall the three layers of personalization: configuration, execution, and context. One of the requirements above mandates no noticeable changes for business users, so moving the configuration of personalization rules out of Sitecore is not an option. What about the other two layers? That is, must the personalization rules run on the origin, and must the context be managed on the origin only? The answer is no, so you can move both “to the edge,” which is your CDN. Rather than just caching files, CDNs nowadays offer an entire edge-compute layer, which addresses the performance, scaling, and network-latency issues related to content delivery from Sitecore XP.

That’s exactly the approach taken by the Optimize capability of Uniform for Sitecore, a product based on years of R&D and hands-on engagement with some of the world’s largest and most complex Sitecore installations. Optimize enables business users to continue to configure personalization as before, offloading everything related to running personalization to the edge. This diagram shows the two-step process:

- Pages are cached on the CDN in a prerendered state that includes the personalization rules that business users configured in Sitecore for those pages.

- Uniform passes the context for executing personalization along with the HTTP request, so the edge can send the browser a personalized page with no calls to the origin (CD instance).
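The core idea can be sketched in a few lines. This is a minimal illustration, not Uniform’s implementation: it assumes the cached page carries every variant of a component plus its rules, and that the edge picks a variant from the request’s own query string and cookies. All type and function names are hypothetical. In an edge runtime such as a Cloudflare Worker or an Akamai EdgeWorker, `url` and `cookieHeader` would come from the incoming request.

```typescript
// Minimal sketch of edge-side variant selection: the cached component carries every variant
// plus its rules, and the edge picks one per request with no origin call.
// All names are hypothetical; this is not Uniform's implementation.

interface EdgeRule {
  queryParam?: { name: string; value: string };
  cookie?: { name: string; value: string };
  variantHtml: string;
}

interface CachedComponent {
  defaultHtml: string;
  rules: EdgeRule[]; // evaluated in order; first match wins
}

function parseCookies(header: string): Record<string, string> {
  const cookies: Record<string, string> = {};
  for (const part of header.split(";")) {
    const index = part.indexOf("=");
    if (index > 0) cookies[part.slice(0, index).trim()] = part.slice(index + 1).trim();
  }
  return cookies;
}

function personalizeAtEdge(component: CachedComponent, url: string, cookieHeader: string): string {
  const params = new URL(url).searchParams;
  const cookies = parseCookies(cookieHeader);
  for (const rule of component.rules) {
    const queryOk = !rule.queryParam || params.get(rule.queryParam.name) === rule.queryParam.value;
    const cookieOk = !rule.cookie || cookies[rule.cookie.name] === rule.cookie.value;
    if (queryOk && cookieOk) return rule.variantHtml;
  }
  return component.defaultHtml;
}

const hero: CachedComponent = {
  defaultHtml: "<h1>Welcome</h1>",
  rules: [{ queryParam: { name: "utm_campaign", value: "demo" }, variantHtml: "<h1>Demo campaign hero</h1>" }],
};

console.log(personalizeAtEdge(hero, "https://example.com/?utm_campaign=demo", "")); // <h1>Demo campaign hero</h1>
console.log(personalizeAtEdge(hero, "https://example.com/", ""));                   // <h1>Welcome</h1>
```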

Analytics

The Uniform tracker that runs on the client captures analytics, i.e., the same activity collected in xDB when the CD instance tracks profile scoring, pattern matching, personalization events, etc. Also, the Uniform tracker can dispatch analytics to different systems, notably Google Analytics, which is what most customers prefer, unlocking data like personalization activity that was previously available only in xDB. Customers who want analytics captured in xDB can send that data to xDB with the Uniform tracker. Remarkably, analytics capture by Uniform occurs without the CD instance having to render pages, significantly reducing the load on the CD servers and lowering their operational cost.

Benefits of decoupled personalization

Decoupled personalization yields three important benefits:

- Reduced CD infrastructure, because requests for prerendered pages no longer go to the origin. In fact, you can even disable your CD infrastructure for those sites, achieving a TTFB in the 50–100 ms range, two to four times faster than Google’s recommendation.

- Instant, automatic, and reliable scalability. Traffic spikes are absorbed by your CDN, which scales quickly and automatically, rather than by the Sitecore CD infrastructure, which cannot.

- Low network latency. This benefit is courtesy of the many edge nodes, or Points of Presence (PoPs), worldwide, which serve content as close as possible to visitors. Since the laws of physics apply even in cyberspace, data takes time to travel around the world. To reduce network latency, Akamai leverages 4,207 PoPs, and Cloudflare’s CDN covers 200-plus cities in over 100 countries.

Uniform’s Optimize and Deploy capabilities

Decoupled personalization is compatible with sites built on both the MVC (with or without SXA) and JSS presentation-layer technologies. A major requirement is the Jamstack architecture, which is right up Uniform for Sitecore’s alley. Uniform for Sitecore offers two main capabilities:

- Optimize: for decoupled tracking and personalization.

- Deploy: for applying Jamstack to sites built with any of the presentation-layer technologies mentioned above. Notably, you can gain the benefits of Jamstack even with your MVC sites.

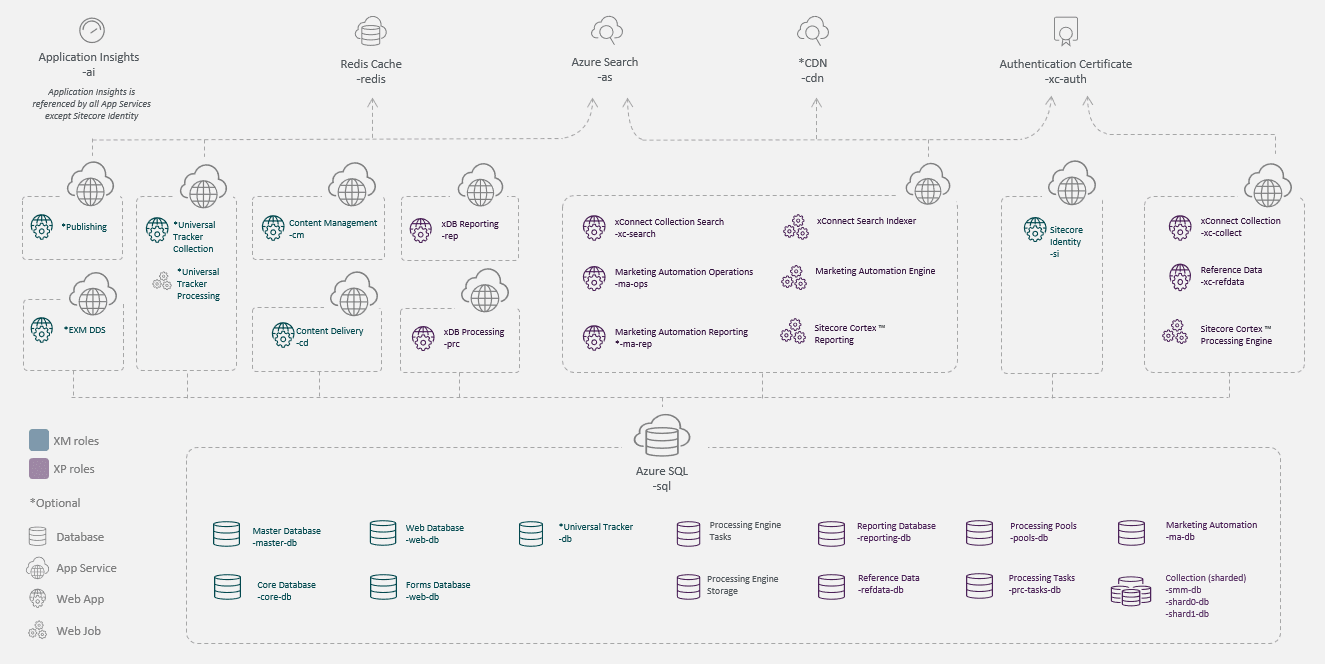

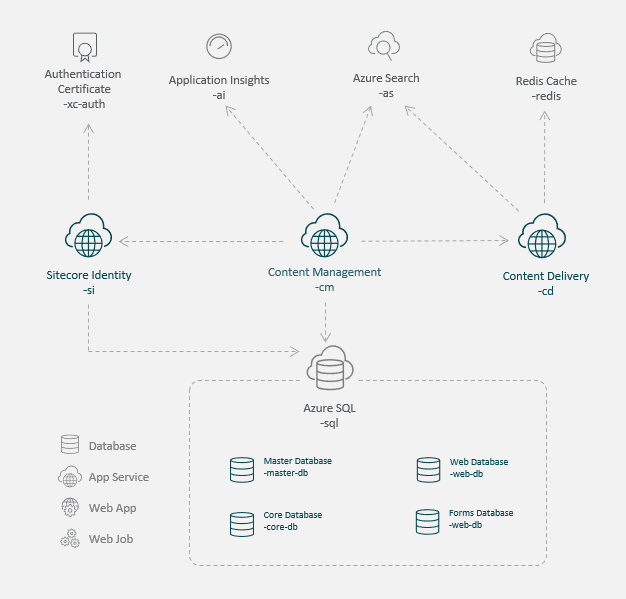

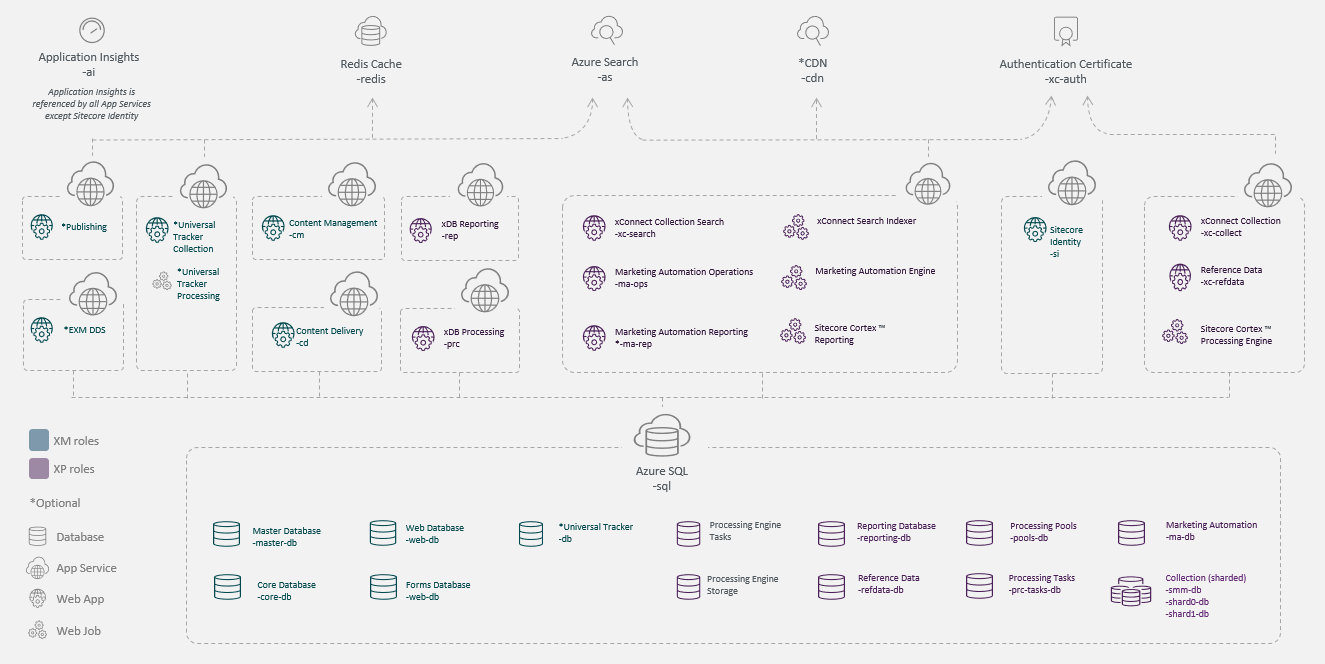

Personalization for Sitecore XM customers

Finally, decoupled personalization is compatible with your Sitecore XM license and topology and does not require Sitecore XP. Your Sitecore infrastructure footprint can then be much smaller, so much so that you can transition from the XP-scaled topology to the XM-scaled topology, as shown below:

For details on Sitecore topologies, see the related documentation.

Edge-side Personalization in Action

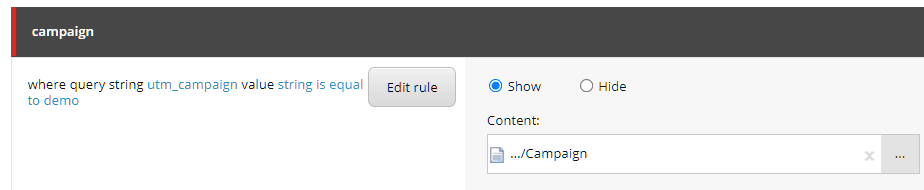

Let's take a sample MVC site for a spin and observe its performance. Akamai is the CDN here, but you can expect similar results on Cloudflare. The simple use case personalizes based on the UTM campaign in the query string below, adding it to the HTTP request that the browser creates:

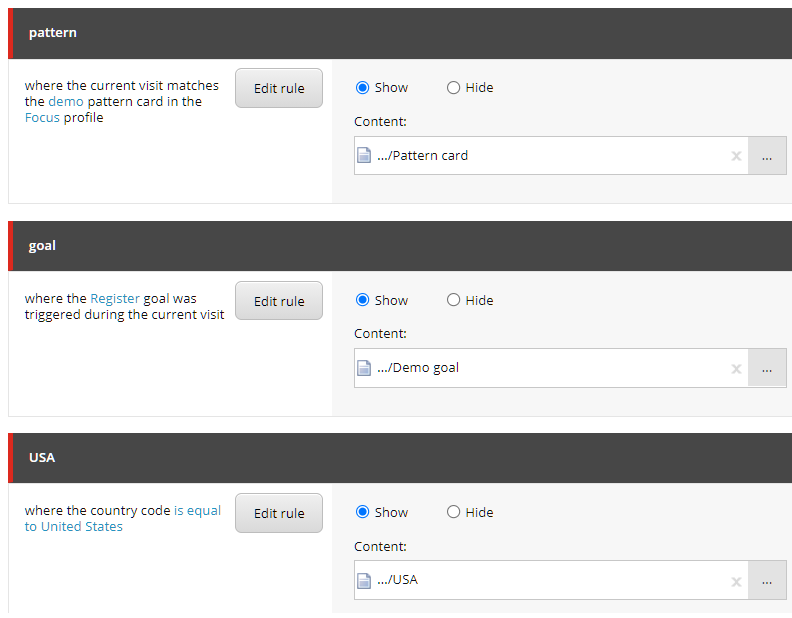

<https://yoursiteonedge/?utm_campaign=demo>The personalization rule looks like this in Sitecore:

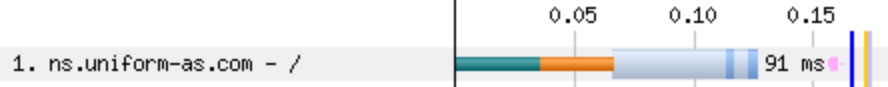

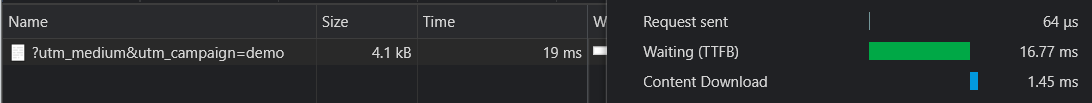

After publication, the page is available on the CDN. Make a request through webpagetest.org.

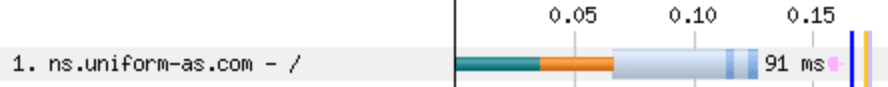

The time shown (rounded up to 0.2 second) is the page-load time, more than 10 times faster than with origin-based personalization. That time covers all of these tasks: the DNS lookup, the browser’s establishment of the initial network connection to the CDN, TTFB, download of the HTML page, and parsing of the JavaScript code on the main thread. The TTFB usually stays under 100 ms, as shown in this report.

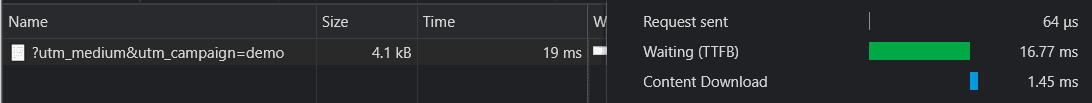

Here is a more detailed breakdown of the 91-ms page-response time:

What’s even more impressive is that making the request from your own browser yields faster performance still: less than 17 ms, almost 12 times faster than Google’s recommendation.

You can kick it up a notch and add more sophisticated personalization conditions to the mix, for example:

- Geography: The visitor's country.

- Goals: The determination of whether a Sitecore goal has been triggered during the current visit.

- Pattern match: The determination of whether the visit matches a profile pattern.

What’s more, you can target anything in the HTTP request (query-string parameters and cookies) along with conditions that pertain to the visit and visitor, e.g., the visit number, goals, page events, profile scores, and pattern matches, all of which are on this list of supported conditions. Uniform is constantly adding support for other conditions. You can also make custom conditions work by means of Uniform’s Optimize API. For details on the procedure, see the product documentation. The 10-second demo below shows edge-side personalization with those conditions at work. On the left is the TTFB; on the right, the personalization in action. Note also the personalized hero component: as the visitor navigates to other pages, their profile is updated, creating the context that triggers different personalization variants.
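Conditions like these compose naturally as predicates over the visit context. This minimal sketch shows one way to combine country, goal, pattern, and visit-number checks with `and`/`or`. The `VisitContext` shape and the helper names are hypothetical and are not Uniform’s Optimize API.

```typescript
// Minimal sketch of combining conditions into one rule. The VisitContext shape and helper
// names are hypothetical and are not Uniform's Optimize API.

interface VisitContext {
  country: string;         // e.g., resolved from the visitor's IP address at the edge
  visitNumber: number;
  goals: string[];         // goals triggered during the current visit
  matchedPattern?: string; // best-matching profile pattern, if any
}

type Condition = (ctx: VisitContext) => boolean;

const countryIs = (code: string): Condition => (ctx) => ctx.country === code;
const goalTriggered = (goal: string): Condition => (ctx) => ctx.goals.indexOf(goal) !== -1;
const patternIs = (pattern: string): Condition => (ctx) => ctx.matchedPattern === pattern;
const visitNumberAtLeast = (n: number): Condition => (ctx) => ctx.visitNumber >= n;

const and = (...conditions: Condition[]): Condition => (ctx) => conditions.every((c) => c(ctx));
const or = (...conditions: Condition[]): Condition => (ctx) => conditions.some((c) => c(ctx));

// "Country is Australia AND (Newsletter goal triggered OR Researcher pattern matched)"
const engagedAustralian = and(countryIs("AU"), or(goalTriggered("Newsletter"), patternIs("Researcher")));
// "Country is Australia AND this is at least the second visit"
const returningAustralian = and(countryIs("AU"), visitNumberAtLeast(2));

console.log(engagedAustralian({ country: "AU", visitNumber: 1, goals: ["Newsletter"] })); // true
console.log(engagedAustralian({ country: "NL", visitNumber: 2, goals: [], matchedPattern: "Researcher" })); // false
console.log(returningAustralian({ country: "AU", visitNumber: 1, goals: [] })); // false
```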

Limitations

Below are a few Q&As on demos like the one above.

How does behavioral customization work with decoupled personalization? Does Uniform support Sitecore profiling and pattern matching?

Pattern-match conditions work in the same way for both the Uniform tracker and the origin-based Sitecore tracker. The Uniform tracker implements profile scoring and pattern matching and runs in the visitor’s browser, eliminating the need for the CD instance to handle the request. The demo above is an example of pattern-match personalization.
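For intuition, here is a minimal sketch of profile scoring and pattern matching that runs entirely client side. It assumes that profile scores are averaged over the pages visited and that the matched pattern is the one whose key values lie at the smallest Euclidean distance from the visitor’s averaged scores. That is a common nearest-pattern approach, not necessarily the exact algorithm of either tracker. The profile keys, pattern names, and values are hypothetical.

```typescript
// Minimal sketch of client-side profile scoring and pattern matching. Assumptions: scores are
// averaged over the pages visited, and the matched pattern is the one at the smallest
// Euclidean distance from the visitor's averaged scores. Keys, names, and values are hypothetical.

type Scores = Record<string, number>;

function averageScores(pageScores: Scores[], keys: string[]): Scores {
  const average: Scores = {};
  for (const key of keys) {
    const total = pageScores.reduce((sum, page) => sum + (page[key] ?? 0), 0);
    average[key] = pageScores.length > 0 ? total / pageScores.length : 0;
  }
  return average;
}

function matchPattern(profile: Scores, patterns: Record<string, Scores>, keys: string[]): string | undefined {
  let best: string | undefined;
  let bestDistance = Infinity;
  for (const [name, pattern] of Object.entries(patterns)) {
    const distance = Math.hypot(...keys.map((k) => (profile[k] ?? 0) - (pattern[k] ?? 0)));
    if (distance < bestDistance) {
      bestDistance = distance;
      best = name;
    }
  }
  return best;
}

const profileKeys = ["price", "quality", "research"];
const patternCards: Record<string, Scores> = {
  Bargain: { price: 8, quality: 2, research: 3 },
  Researcher: { price: 3, quality: 5, research: 9 },
};

// Scores of the pages the visitor viewed, tracked in the browser with no CD instance involved.
const visitedPages: Scores[] = [
  { quality: 4, research: 8 },
  { price: 2, quality: 6, research: 10 },
];
const visitorProfile = averageScores(visitedPages, profileKeys); // { price: 1, quality: 5, research: 9 }
console.log(matchPattern(visitorProfile, patternCards, profileKeys)); // Researcher
```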

How do I personalize according to historical events?

The Uniform tracker collects visitor activity, storing it in the browser for personalization. You can also populate the tracker state with data captured outside the Uniform tracker, such as data from a DMP or CDP. Moreover, to personalize for returning visitors, you can load historical data from Sitecore xDB into the tracker with an endpoint in Uniform for Sitecore.

How about analytics?

The Uniform tracker’s dispatch feature sends data to external systems during capture. The Uniform connector can send the activity in the Sitecore Experience Profile to Google Analytics with no custom code. For customers who would like to continue to collect visitor data in xDB, dispatch is the answer. In addition, the Uniform tracker API supports the creation of custom dispatchers, meaning that you can send tracker data anywhere you prefer.
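The dispatch pattern itself is simple: the tracker queues captured events and hands each batch to every registered dispatcher. This minimal sketch illustrates that pattern only. The `Tracker` class, `Dispatcher` interface, and event shape are hypothetical and are not the Uniform tracker API, and the in-memory dispatchers stand in for destinations such as Google Analytics or xDB.

```typescript
// Minimal sketch of a tracker that dispatches captured events to several destinations.
// The Dispatcher interface and event shape are hypothetical, not the Uniform tracker API;
// the in-memory dispatchers stand in for Google Analytics, xDB, or any other system.

interface TrackedEvent {
  type: "pageView" | "goal" | "personalization";
  name: string;
  timestamp: number;
}

interface Dispatcher {
  name: string;
  dispatch(events: TrackedEvent[]): void;
}

class Tracker {
  private readonly dispatchers: Dispatcher[] = [];
  private readonly queue: TrackedEvent[] = [];

  addDispatcher(dispatcher: Dispatcher): void {
    this.dispatchers.push(dispatcher);
  }

  track(type: TrackedEvent["type"], name: string): void {
    this.queue.push({ type, name, timestamp: Date.now() });
  }

  // Send the queued events to every registered dispatcher, then clear the queue.
  flush(): void {
    const batch = this.queue.splice(0, this.queue.length);
    for (const dispatcher of this.dispatchers) dispatcher.dispatch(batch);
  }
}

// A custom dispatcher that records what it receives.
function memoryDispatcher(name: string, sink: TrackedEvent[]): Dispatcher {
  return { name, dispatch: (events) => { sink.push(...events); } };
}

const analyticsSink: TrackedEvent[] = [];
const xdbSink: TrackedEvent[] = [];
const tracker = new Tracker();
tracker.addDispatcher(memoryDispatcher("analytics", analyticsSink));
tracker.addDispatcher(memoryDispatcher("xdb", xdbSink));
tracker.track("pageView", "/products");
tracker.track("goal", "Newsletter");
tracker.flush();
console.log(analyticsSink.length, xdbSink.length); // 2 2
```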

Does Uniform support A/B testing?

Yes, but not the Sitecore version. The Uniform version, which is simpler and more intuitive, is the fruit of many years of collaboration with customers to design and implement personalization, as well as of extensive customer feedback. Plus, our version is automatically integrated with Google Analytics, a top customer preference.
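A/B testing without a server-side session needs a way to keep each visitor in the same variant across page views. One common technique, not necessarily Uniform’s, is to hash a stable visitor ID together with the test ID and map the hash to a variant. This minimal sketch uses 32-bit FNV-1a only because it is short; the IDs and variant names are hypothetical.

```typescript
// Minimal sketch of deterministic A/B assignment (a common technique, not necessarily
// Uniform's). FNV-1a (32-bit, over UTF-16 code units) is used only because it is short;
// the IDs and variant names are hypothetical.

function fnv1a32(input: string): number {
  let hash = 0x811c9dc5; // FNV offset basis
  for (let i = 0; i < input.length; i++) {
    hash ^= input.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193); // multiply by the FNV prime, modulo 2^32
  }
  return hash >>> 0; // unsigned 32-bit result
}

function assignVariant(visitorId: string, testId: string, variants: string[]): string {
  // Scale the hash to [0, variants.length); this relies on the high bits, which mix better.
  const index = Math.floor((fnv1a32(`${testId}:${visitorId}`) / 2 ** 32) * variants.length);
  const variant = variants[index];
  if (variant === undefined) throw new Error("At least one variant is required");
  return variant;
}

const variants = ["control", "new-hero"];
const first = assignVariant("visitor-123", "hero-test", variants);
const again = assignVariant("visitor-123", "hero-test", variants);
console.log(first === again); // true: the same visitor always sees the same variant
```

Including the test ID in the hashed key keeps a visitor’s assignment in one test independent of their assignment in another.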

What about delivery through CDNs?

Even though Uniform delivers experiences through Akamai and Cloudflare CDNs, our approach works with any CDN that supports programmatic control over the handling of HTTP requests.

Summary

With Uniform for Sitecore, our flagship Sitecore product, Sitecore customers can offload all their Sitecore CD traffic to global CDNs without major changes to their system. Decoupled personalization is available with or without Jamstack. Being compatible with Sitecore 9+, it works with both XM and XP topologies. You need not change your site’s presentation technology: MVC, SXA, or JSS. We support them all. To see Uniform for Sitecore in operation, schedule a free demo with us.
